Here's what we'll cover

Ro’s first broadcast Super Bowl ad was a major undertaking for Ro as a business and a brand. So, why did we need engineers to work on a TV commercial? Sadly, we didn’t, and we’re still jealous that we could not attend the studio filming with Serena. However, we did need engineers, alongside the rest of our tech org, to tackle a major challenge behind the scenes to prepare for our Super Bowl debut - making sure our website and onboarding flows were ready to handle the surge of traffic that would come with the event.

You will probably be unsurprised to learn that a lot of people watch the Super Bowl - of the 30 most watched TV broadcasts of all time in the US, 27 are Super Bowls. Those viewers aren’t just watching passively - they’re actively engaging with the brands that advertise. Sites and brands mentioned during Super Bowl ads can see traffic thousands of times higher than their daily baseline. For companies that have their system costs, rate limits, database sizes, and connection pools tuned for their regular traffic patterns, this type of surge can quickly exhaust resources and topple a system - and because the traffic arrives so quickly, the spike will come and go before any weary on-call engineer can scale up infrastructure resources to meet the moment.

Ro’s platform is no exception to that pattern. Ro has offered healthcare at scale for over 8 years since the company was founded, with our platform able to help more patients daily than some physical doctors’ offices could in a year. That is only possible through our continuous investment in technology to collect and structure health data, streamline provider workflows, efficiently route requests, and give patients all the tools they need to be in the driver’s seat of their treatment. But there is scale, and then there is capital-S Scale. Our day-to-day traffic is much more evenly distributed, and our core platform was built for, and operates according to, that expected traffic. It was not built (reasonably so) to support a 10x, 100x, or larger traffic spike in a period of under a minute.

There are plenty of ways to create a very simple page that can handle traffic, tell Ro’s story, and maybe collect emails for folks to sign up later. Claude could probably spin one up by the time I finish typing this sentence (though given the AI-based websites that crashed due to Super Bowl traffic this year, maybe not). Regardless - we didn’t want to just make a promise of healthcare that evening. We wanted to present our full platform to this outsized audience and let them start their healthcare journeys right at that moment. If they were ready, we needed to be ready - regardless of how many folks showed up at the front door, we wanted to welcome them in. So, we were going to need a much bigger front door.

Why not just throw more hardware at it?

Ro is over 8 years old, but it is still a startup at heart - and some of our core systems and architecture still reflect that fact. That doesn’t mean we’re running on bubblegum and paperclips, but it also doesn’t mean every inch of our system is so perfectly optimized and structured that we can simply increase database and service sizes and call it a day. Like many rapidly growing startups, our architecture sits somewhere between a consolidated backend monolith and purpose-specific microservices. As we approached our scaling challenge, we had to keep several system constraints in mind:

Certain services did not have a “bigger boat” - Some of our older core models within our monolith were already on the largest hardware available from our provider. So, while we could optimize in place, we could not meaningfully increase our hardware capacity or database connection pool on certain core APIs.

Interconnected dependency trees - For any microservice that we scaled out, we would also need to ensure that doing so would not unintentionally create a flood of traffic to downstream services (which, in some cases, included the aforementioned core monolith).

Active affiliated medical practice - Ro’s affiliated medical practice relies on clinical tooling every hour of the day, every day of the year. Any major architectural changes would need to be made in place in a system actively operating and serving thousands every hour - which means minimal to no downtime allowed to ensure as few disruptions to clinical practice, pharmacies, and patients as possible. You know, just to keep things interesting.

Where to Start: With Patients

Where do you start when you need to scale across a broad application space? The same place as your users - or in our case, our patients. Going back to my soon-to-be-annoying front door metaphor - if you build a house with a really large kitchen and a small front door that you always lock, it really doesn’t matter how big your kitchen is - no one can get into the house to begin with. Similarly, if we put all our focus on downstream scaling in our provider tooling and user account experience but didn’t scale out the actual account creation process, we were going to have a lot of folks stuck on our front lawn.

We used our patient onboarding journey as our roadmap for where to focus scaling efforts and where we needed to take the biggest swings. We combined k6, a robust open-source load testing tool, with some of our existing user simulation patterns from our browser-based automated testing and product analytics on user behavior (click-through rates through the onboarding funnel). This gave us an in-house engine that we could use to fully mimic how patients (and therefore, APIs and traffic patterns through systems) would behave in a surge. No guessing necessary - we could simulate a Super Bowl every day in our non-production environment and look at the impact across services that powered the onboarding flow (error rates, latency, CPU utilization) within our existing observability tooling.
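To make the idea concrete, here is a minimal sketch of a funnel-driven user simulation. It is plain Python rather than k6, and the step names and continue rates in `FUNNEL` are invented for illustration; in practice those rates would come from product analytics, and each step would issue real requests instead of just being counted.

```python
import random

# Hypothetical funnel: (step name, probability that a user continues past this step).
# These numbers are made up; real values would come from product analytics.
FUNNEL = [
    ("landing_page", 0.60),
    ("create_account", 0.70),
    ("health_questions", 0.80),
    ("submit_visit", 1.00),
]


def simulate_user(rng: random.Random) -> list[str]:
    """Walk one virtual user through the funnel and return the steps they reached."""
    reached = []
    for step, continue_rate in FUNNEL:
        reached.append(step)
        if rng.random() >= continue_rate:
            break  # this user drops off after reaching this step
    return reached


def simulate_surge(users: int, seed: int = 0) -> dict[str, int]:
    """Count how many virtual users hit each step, i.e. the load each step would see."""
    rng = random.Random(seed)
    hits = {step: 0 for step, _ in FUNNEL}
    for _ in range(users):
        for step in simulate_user(rng):
            hits[step] += 1
    return hits


if __name__ == "__main__":
    print(simulate_surge(10_000))
```

Because drop-off is applied step by step, later steps always see no more traffic than earlier ones, which is the property that lets a simulation like this predict where in the flow a surge will land hardest.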

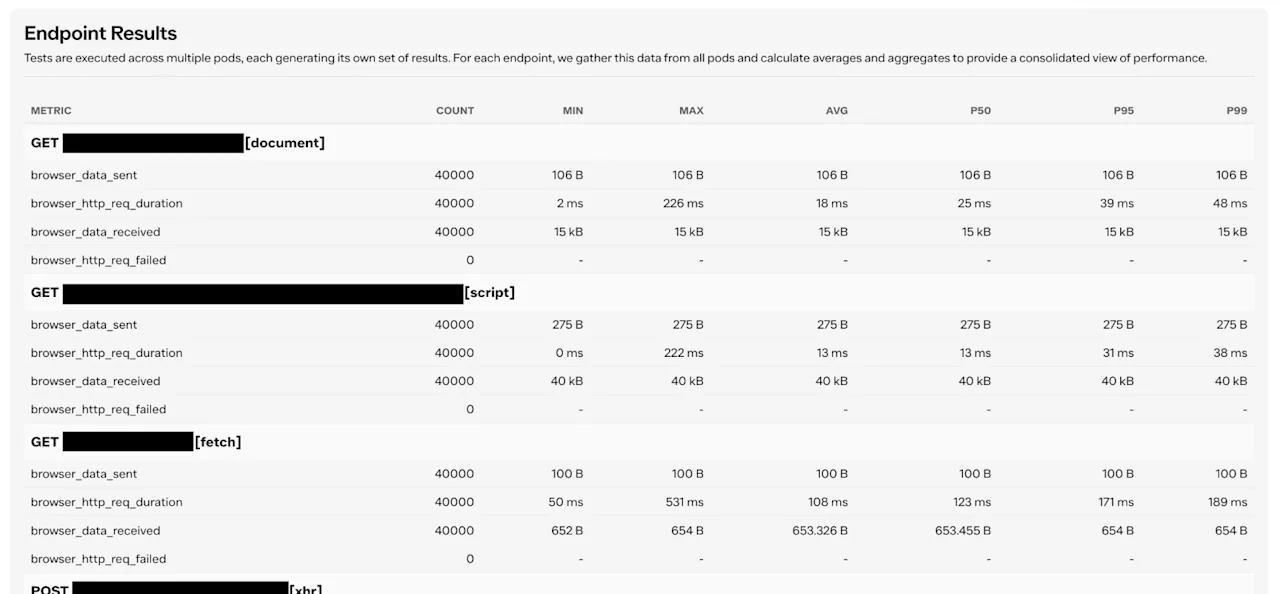

We were also able to build on top of our existing testing suite UI (our home-grown application built on top of Jenkins and Playwright for orchestrating end-to-end testing), which let us take a magnifying glass to our daily load test runs, going endpoint by endpoint through our patient onboarding journey to look at p95 latency and failure rates.

(This is from a more recent run - our first runs were much less clean.)
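For readers less familiar with these metrics, the per-endpoint numbers can be computed roughly as follows. This is a sketch that assumes raw samples are available as `(endpoint, latency_ms, ok)` tuples, which is a hypothetical shape, and it uses the nearest-rank definition of p95; load testing and observability tools may use slightly different percentile definitions.

```python
import math
from collections import defaultdict


def p95(values: list[float]) -> float:
    """Nearest-rank 95th percentile: smallest sample with at least 95% of samples at or below it."""
    if not values:
        raise ValueError("p95 requires at least one sample")
    ordered = sorted(values)
    rank = math.ceil(95 * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def summarize(samples: list[tuple[str, float, bool]]) -> dict[str, dict[str, float]]:
    """Group (endpoint, latency_ms, ok) samples and report p95 latency and failure rate."""
    by_endpoint: dict[str, list[tuple[float, bool]]] = defaultdict(list)
    for endpoint, latency_ms, ok in samples:
        by_endpoint[endpoint].append((latency_ms, ok))

    report = {}
    for endpoint, rows in by_endpoint.items():
        latencies = [latency for latency, _ in rows]
        failures = sum(1 for _, ok in rows if not ok)
        report[endpoint] = {
            "p95_ms": p95(latencies),
            "failure_rate": failures / len(rows),
        }
    return report
```

Tracking p95 rather than the average matters here because a surge tends to hurt the slowest requests first, and an average can look healthy while a meaningful share of users are waiting far too long.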

While we had the ability to simulate a user journey all the way through to provider review and treatment flows, we opted to focus only on the steps of onboarding up to submission. Why stop there? Because the submission of our Online Visit (or “OV” - our primary onboarding flow for the Body Program) is the handoff point between patients and Ro. Once Ro has control of the next steps, we can intentionally pace out what happens next - queue information to be processed at a pace that is much more in line with our normal day-to-day volume. This “flattening of the curve” moment would allow us to focus our largest technical innovations for scale where patients would be driving huge traffic spikes, and safeguard downstream systems so they would never even know that we were experiencing such anomalous volume. And since patients had already provided all of their information and had a clear picture of next steps, nothing would feel like a “line” to them - folks are through the front door, have been made welcome, and have a comfortable seat.
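The "flattening" effect of a queue can be illustrated with a toy model. The sketch below assumes time is divided into ticks and that downstream systems accept at most `max_per_tick` submissions per tick; both the function name and the numbers are illustrative, not a description of Ro's actual queue.

```python
from collections import deque


def drain_at_fixed_rate(arrivals_per_tick: list[int], max_per_tick: int) -> list[int]:
    """Return how many queued submissions are released downstream on each tick."""
    queue: deque[int] = deque()
    released = []
    tick = 0
    # Keep ticking until all arrivals have come in and the queue is empty.
    while tick < len(arrivals_per_tick) or queue:
        if tick < len(arrivals_per_tick):
            queue.extend(range(arrivals_per_tick[tick]))  # placeholder submissions
        batch = min(max_per_tick, len(queue))
        for _ in range(batch):
            queue.popleft()
        released.append(batch)
        tick += 1
    return released


if __name__ == "__main__":
    # A burst of 100 submissions in one tick is released as 30, 30, 30, 10.
    print(drain_at_fixed_rate([100, 0, 0], max_per_tick=30))
```

However sharp the incoming spike, the downstream side never sees more than `max_per_tick` per tick; the cost is that the queue takes longer to empty, which is acceptable once the patient has already finished their part.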

So that’s the secret to scaling everything - you don’t actually have to scale everything. But there was still a great deal of work to be done.

The most performant API calls are the ones that don’t exist.

Exponential technical scale doesn’t come from database query optimization - it comes from a fundamental change in how the underlying technology is built. With our narrowed focus on the Ro onboarding experience, we picked apart and re-questioned every assumption of our existing architecture. The benefit of our load testing tooling being a user simulation versus being written as a series of HTTP-only endpoint calls is that we were able to capture every single endpoint we hit during the course of an onboarding flow - including ones we did not realize we were calling. We pulled a total of 60 distinct API calls out of our flows and broke them down into four major categories:

Essentials - things that power safe, secure, and accurate gathering of health data via Online Visit questions and answers.

Extras - experience enhancements that help smooth onboarding requirements but are not required to happen in the moment and could be time-shifted to after someone has completed their initial onboarding flow.

Configuration - settings data for copy and products that very rarely changes, if ever, that we were making live API calls for to query back from our database every time instead of treating as config in code.

Cruft - leftover calls for components we thought were deprecated or “dead code”, but still had some vestiges that were haunting the experience.

The configuration and cruft have low-lift, conceptually straightforward solves. Cruft can be deleted, and configuration can be bundled into our frontend application dependencies so it is served as a static asset from a high-availability CDN without needing to hit our APIs directly at all.

The essentials and extras were a bit trickier, as they represented a series of calls made incrementally during the Visit - some of whose outputs served as inputs to subsequent requests. Rather than litigate these individually at first, we took a different approach to the technical design of a solution: pretend literally none of it existed, and design what we would build to serve the same purpose. No longer beholden to the biases of our previous architecture, we could identify a conceptually straightforward solution (even if it would be a higher level of effort to implement) following our new guiding principle: “Async All The Things”.

Async All The Things

The main hurdle in our current onboarding application architecture was a heavy dependency between the client and server to pass incremental data back and forth. In most cases, for the purpose that the onboarding flow was intended to serve, this actually wasn’t necessary for the core functionality - it was a remnant of how we built the original application 8 years ago. So, we flipped the script - rather than assuming all information would be collected and stored synchronously while the patient was completing their first online visit, we assumed it would all be collected and processed asynchronously.

This would allow us to:

Fully control when traffic entered our system regardless of volume of patients on the site

Batch certain incremental calls into a single database transaction

Maintain all dependencies between calls that need to be sequenced in order (e.g. calls that need an account to be created in order to have an account UUID), so we did not have to expand our overhaul to multiple downstream services
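As an illustration of the batching and sequencing points above, here is a minimal sketch using an in-memory SQLite database. The table names, column names, and submission shape are all hypothetical; the point is only that the account is created first, its UUID is reused for the dependent writes, and everything commits or rolls back together.

```python
import sqlite3
import uuid


def make_demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with a hypothetical schema."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE accounts (id TEXT PRIMARY KEY, email TEXT NOT NULL)")
    conn.execute(
        "CREATE TABLE answers ("
        "account_id TEXT NOT NULL, question TEXT NOT NULL, answer TEXT)"
    )
    return conn


def process_submission(conn: sqlite3.Connection, submission: dict) -> str:
    """Write one queued submission: account first, then all answers, in one transaction."""
    account_id = str(uuid.uuid4())
    with conn:  # commits on success, rolls back if any statement raises
        # Step 1: the account must exist before anything can reference its UUID.
        conn.execute(
            "INSERT INTO accounts (id, email) VALUES (?, ?)",
            (account_id, submission["email"]),
        )
        # Step 2: answers that were once sent one request at a time go in as a batch.
        conn.executemany(
            "INSERT INTO answers (account_id, question, answer) VALUES (?, ?, ?)",
            [(account_id, q, a) for q, a in submission["answers"].items()],
        )
    return account_id
```

If any answer fails to write, the account insert is rolled back too, so downstream systems never see a half-created patient record.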

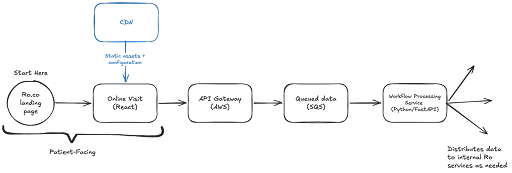

Our core architectural components would be:

A frontend application layer to collect and consolidate data and manage API call logic and any forced timeouts or retries (to prevent the experience from hanging up for a non-essential API response)

A high-throughput endpoint isolated from other architecture to collect incoming data (this is where we can queue data to flatten the curve from traffic spikes)

An application layer between user submission and the rest of Ro systems to orchestrate downstream API calls
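The "forced timeouts" idea in the first component can be sketched as a small wrapper around any non-essential call. The real frontend would be browser code; this Python version with `asyncio` is only a stand-in for the pattern, and `best_effort` and `slow_extra_call` are hypothetical names.

```python
import asyncio


async def best_effort(coro, timeout_s: float, default=None):
    """Await a non-essential call, but give up after timeout_s and return a default."""
    try:
        return await asyncio.wait_for(coro, timeout=timeout_s)
    except (asyncio.TimeoutError, ConnectionError):
        # The experience continues with a fallback instead of hanging or erroring.
        return default


async def slow_extra_call() -> str:
    # Stand-in for a hypothetical non-essential request that is responding slowly.
    await asyncio.sleep(1.0)
    return "personalized copy"


async def demo() -> str:
    return await best_effort(slow_extra_call(), timeout_s=0.05, default="generic copy")


if __name__ == "__main__":
    print(asyncio.run(demo()))
```

The design choice is that the caller decides up front how long a "nice to have" response is worth waiting for, so a slow dependency degrades the experience slightly rather than blocking it.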

This would give us full control over the “essential” API calls. For the “extra” calls, in addition to frontend guards to prevent any noticeable lag in the experience due to “best effort” requests, we also deployed independent AWS API Gateway endpoints to isolate those calls on the server side. This allowed us to create tiers of priority traffic and ensure “nice to have” features did not unintentionally take down critical systems with volume under high load.
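The isolation described here happens at the infrastructure level, but the underlying principle (sometimes called a bulkhead) can be shown in a few lines. The sketch below is an in-process analogy, not AWS API Gateway configuration; `TierLimiter` and the tier names are hypothetical.

```python
class TierLimiter:
    """Give each traffic tier its own in-flight budget so tiers cannot starve each other."""

    def __init__(self, limits: dict[str, int]) -> None:
        self.limits = dict(limits)
        self.in_flight = {tier: 0 for tier in limits}

    def try_acquire(self, tier: str) -> bool:
        """Admit a request if its tier has capacity left; otherwise shed it."""
        if self.in_flight[tier] >= self.limits[tier]:
            return False
        self.in_flight[tier] += 1
        return True

    def release(self, tier: str) -> None:
        """Mark one request in the tier as finished."""
        self.in_flight[tier] = max(0, self.in_flight[tier] - 1)


if __name__ == "__main__":
    limiter = TierLimiter({"essential": 5, "extra": 2})
    print([limiter.try_acquire("extra") for _ in range(3)])  # third request is shed
    print(limiter.try_acquire("essential"))  # essential capacity is unaffected
```

Because each tier draws from its own budget, a flood of "extra" requests can only exhaust the "extra" capacity.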

Once we re-classified the 60+ API calls from our original load tests under this new architectural paradigm, we were left with only 2 essential calls - 1 GET to our CDN to retrieve the frontend application assets, and 1 POST to submit the final Online Visit at the end of the onboarding flow. This new architectural structure, and subsequent ~97% reduction in blocking synchronous API calls, was the fundamental change in our underlying technology that we needed to unlock exponential scale.
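As a rough check of that figure, taking the original count as 60 calls and the remaining essential calls as 2:

$$
1 - \frac{2}{60} = \frac{58}{60} \approx 0.967
$$

which rounds to the ~97% reduction quoted above.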

#spoilers - It Worked

If you were one of the many, many, many folks who visited ro.co, googled “Ro Serena”, or downloaded the Ro app during the Super Bowl, you would not have noticed any difference between your experience that evening and any other normal business day at Ro - and that was the point. Over the course of about five months, our team quietly replaced everything behind the scenes with an entirely different architectural paradigm.

The impact on our downstream systems was hugely visible only because it was barely noticeable - our core monolith maintained a flat ~300ms latency and barely 1.5x normal query volume, while we were seeing 20-30x traffic to our onboarding experiences. Our affiliated clinical practice was operating as normal. In our previous architecture, this traffic would have ground multiple systems to a halt. It was, quite frankly, a very dull situation room, monitoring charts that mostly did not move - to the point that one engineer called it “the most boring big event I've ever taken part in.” But no one was complaining, because when it comes to system stability at scale - boring is the gold standard.

Even though the original push for our foundational overhaul was a specific traffic spike, our design principles have also opened massive headroom across all of our systems for sustained increases in daily patient traffic in other areas of the ongoing healthcare journey. The “async all the things” approach also added bonus resiliency to the platform for folks on slower or less reliable network connections, and gave us some more buffer for our own internal system maintenance. Just for fun (don’t judge our definition of fun), we ran a simulation in a lower environment where we just… turned off our core backend. Between our isolated submission endpoint and our async paradigms, we still ran a successful load test that got all simulated users through to onboarding completion, with their information on hold in an internal queue to be processed when the backend came back online. Very few systems can claim to be offline and online at the same time - but we are now one of them.
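The "offline and online at the same time" behavior follows from separating acceptance from processing. Here is a minimal sketch, assuming a hypothetical `send` callable that raises `ConnectionError` while the backend is down; a production queue would be durable rather than an in-memory `deque`.

```python
from collections import deque
from typing import Callable


class SubmissionBuffer:
    """Accept submissions immediately and deliver them whenever the backend is reachable."""

    def __init__(self, send: Callable[[dict], None]) -> None:
        self.send = send  # hypothetical backend call; raises ConnectionError when down
        self.pending: deque[dict] = deque()

    def accept(self, submission: dict) -> None:
        """Always succeeds from the patient's point of view."""
        self.pending.append(submission)

    def flush(self) -> int:
        """Deliver pending submissions in order; stop at the first failure and keep the rest."""
        delivered = 0
        while self.pending:
            try:
                self.send(self.pending[0])
            except ConnectionError:
                break  # backend still down; try again on the next flush
            self.pending.popleft()
            delivered += 1
        return delivered
```

An item is only removed from the queue after `send` succeeds, so a backend outage delays processing but does not lose submissions.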

In our next post in this series, we’ll do a deep dive into the specific nuts and bolts behind our frontend framework that allowed us to incrementally release our new architecture - stay tuned!